An Undecidable Problem

Blue Button Feedback:

- Why does halts? have to take a function with an input. Makes it harder to see the problem.

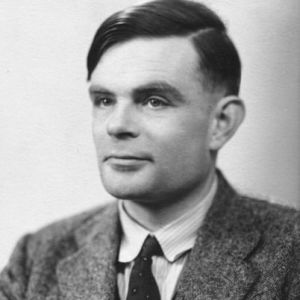

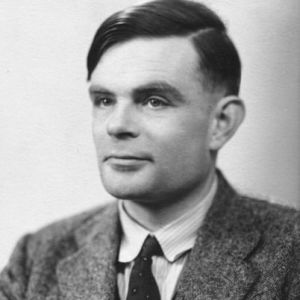

Alan Turing (1912-1954) was one of the founders of computer science. He is known for two achievements. First, he made a massive contribution to winning World War II, by inventing a mathematical theory and corresponding computer hardware to break the encoded messages generated by the Germans.

Second, together with his colleague Alonzo Church, Turing was a founder of theoretical computer science: proving how computers must work regardless of future technology. He proved that there are computations that can never be done, no matter how big and fast computers get.

Second, together with his colleague Alonzo Church, Turing was a founder of theoretical computer science: proving how computers must work regardless of future technology. He proved that there are computations that can never be done, no matter how big and fast computers get.

When Turing did this work, there were no programmable computers; people had to rewire physical machines to solve each new problem. With today's programming languages, we can see and understand the essence of Turing's proof much more easily.

The Halting Theorem

By this point in the course, you've experienced the frustration of debugging a program. Wouldn't it be great if there were a general-purpose debugging program that could read any code and find all the bugs? Alan Turing proved that this can't be done.

Turing used a proof by contradiction, just like Alphie, Betsy, and Gamal on the previous page. Turing assumed he could write a function to find all infinite loops in a program (situations in which a function runs forever without reporting a value). Then, he used that function in a program and found a contradiction (a logical incompatibility), which proved that his assumption was wrong—no such program can exist.

-

Consider this

halts? function. It takes two inputs, a function and an input value for that function, and it reports true if the function would report a value (and halt) or false if the function would run forever.

For example, the function round will not run forever when given the input 7.5; it will report 8. So, halts (round) (7.5) will report true because round (7.5) will eventually halt.

Note that halts? itself can't run forever. We are assuming that it always works, reporting an answer in a finite amount of time.

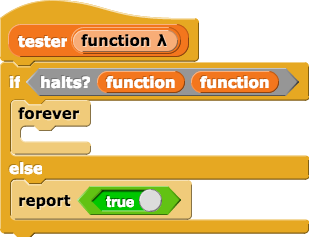

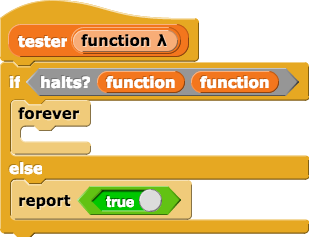

Then, Turing defined a function like this:

-

Review this

tester function closely.

- Notice that the

forever block creates an infinite loop. If the tester code ends up in this part of the if statement, it will never report anything.

-

Look at the

halts? block in the definition. Turing is asking what happens if we call some function with itself as its input (like round(round())).

This is similar to Betsy making a statement about "this statement" (namely, "this statement is false"). This is easy to do in Snap! because it allows functions as inputs to other functions, as you saw in Unit 3. It wasn't easy for Turing, who had to invent the idea of a computer program that can itself be represented as data inside the computer.

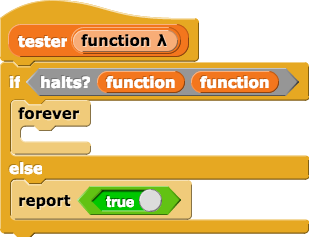

Turing then asked a question that makes the situation exactly like what Betsy said; he calls tester on itself:

Now, the if statement will ask if tester will halt (i.e., not run forever) if it's called with tester as input.

-

Consider the two possibilities for the

Consider the two possibilities for the if statement inside tester:

- Suppose

halts? reports true. This would tell us that tester(tester) succeeds at reporting a value (it halts). Then tester takes the first branch of the if, and so tester loops forever (it doesn't halt).

- Now suppose instead that

halts? reports false. This would tell us that tester(tester) loops forever (it doesn't halt). In that case, tester takes the else branch, and reports true (it does halt).

This contradiction means that the assumption that it's possible write halts? has to be wrong. Turing's "halting problem" has been proven to be impossible, and because of this proof, nobody tries to write a perfect infinite loop checker.

Turing used this example to prove that there are functions that can't be computed at all. And this isn't just a claim about the particular computers that existed in Turing's time, or today. Even with advances in quantum computing, we still won't be able to solve the halting problem.

Second, together with his colleague Alonzo Church, Turing was a founder of theoretical computer science: proving how computers must work regardless of future technology. He proved that there are computations that can never be done, no matter how big and fast computers get.

Second, together with his colleague Alonzo Church, Turing was a founder of theoretical computer science: proving how computers must work regardless of future technology. He proved that there are computations that can never be done, no matter how big and fast computers get.